
The gap between AI hype and actual delivery is massive. Because AI is probabilistic, a system can look impressive in a handful of favorable runs, and vendors in software development often tout those runs as success. However, those results don’t always translate into real business impact.

Too often, vendors overpromise and underdeliver.

If you're considering outsourcing an AI project, it's important to recognize the warning signs before signing a contract.

Underdelivery has consequences that go far beyond wasted budgets. A $200K pilot can quietly turn into a $2M problem once you factor in internal teams, abandoned alternatives, and months of stalled progress.

Worse, failed initiatives can kill an organization’s appetite for future AI investments.

This guide explains five red flags to watch for, so you can avoid AI projects that promise transformation but never deliver.

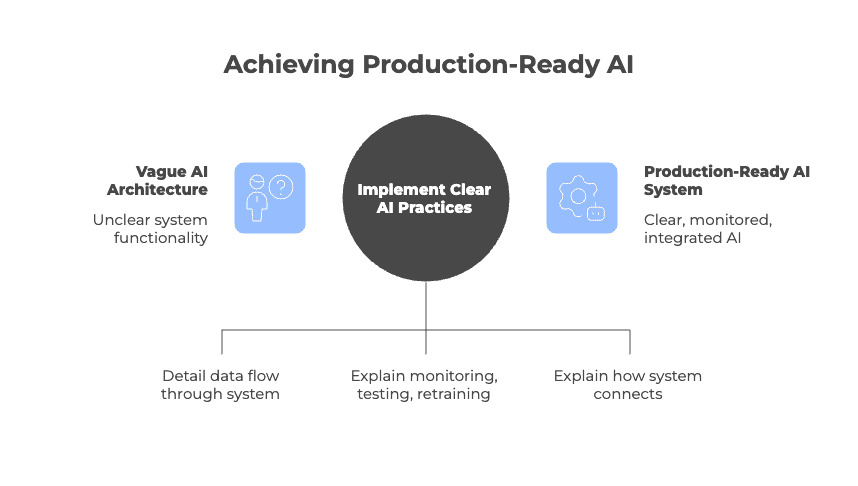

Red Flag #1: Vague AI Architecture

One early warning sign is when a vendor cannot clearly explain how their AI system actually works.

Production-ready AI requires clear data pipelines, model monitoring, retraining processes, and integration logic. If a vendor cannot explain how data flows through the system or how failures are handled, they likely lack real operational experience.

Vague explanations

Some vendors rely on buzzwords like “proprietary algorithm” or “advanced ML” in place of a concrete description of the models, data, and infrastructure involved.

In many cases, the product is simply a thin wrapper around an existing AI model with little proprietary engineering behind it.

Missing model lifecycle

A credible AI vendor should be able to explain how their system is monitored, tested, retrained, and deployed.

If they cannot discuss data quality, model drift, evaluation metrics, or rollback strategies, the system probably has not been tested in real production environments.

Weak integration clarity

Enterprise AI rarely operates in isolation. It must connect with existing systems such as Salesforce, SAP, or Snowflake.

A vendor that promises “seamless integration” but cannot explain how those integrations actually work is a cause for concern.

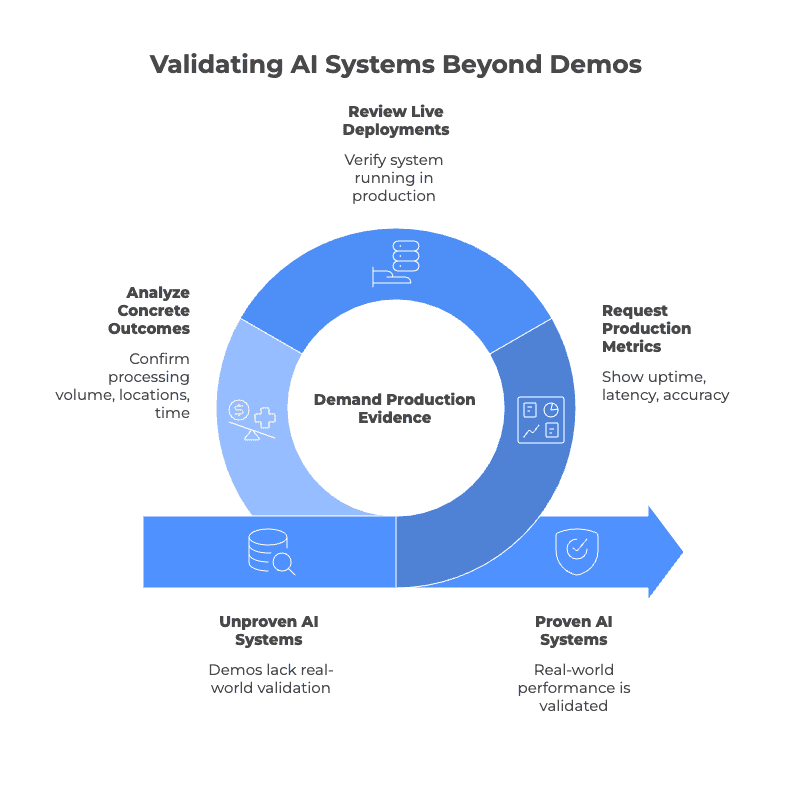

Red Flag #2: Overreliance on Demos Instead of Production Deployments

Demos often run in controlled environments with curated data. Real AI systems must handle messy data, legacy integrations, and heavy user loads while maintaining consistent performance.

Vendors who rely heavily on polished demos may not have real production deployments.

Polished demos but no live deployments

Some vendors showcase impressive demos built on curated datasets or best-case scenarios.

But they may not have a system running in a real customer environment. Many demos run only in sandbox or staging environments.

No production metrics

Production AI systems generate clear operational metrics such as uptime, latency, error rates, and accuracy trends.

Vendors should be able to show dashboards with metrics like 99.9% uptime over several months, p95 latency, and model accuracy trends over time.

If those metrics do not exist, the system likely has not been deployed at scale.
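
As a rough illustration of what sits behind such a dashboard, the Python sketch below computes p95 latency with the nearest-rank method and a monthly uptime percentage. The function names and sample numbers are hypothetical, not taken from any vendor's system; a real deployment would pull these values from its monitoring stack.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def uptime_percent(total_minutes, downtime_minutes):
    """Share of the period during which the service was available, as a percentage."""
    return 100.0 * (total_minutes - downtime_minutes) / total_minutes


# Hypothetical request latencies in milliseconds, including one slow outlier.
latencies_ms = [120, 95, 110, 480, 105, 130, 90, 115, 100, 125]
p95 = percentile(latencies_ms, 95)

# A 30-day month has 43,200 minutes, so 99.9% uptime allows roughly 43 minutes of downtime.
uptime = uptime_percent(total_minutes=30 * 24 * 60, downtime_minutes=40)

print(f"p95 latency: {p95} ms, uptime: {uptime:.3f}%")
```

Note how a single slow request dominates p95 in a small sample. This is one reason to ask vendors for metrics gathered over months of real traffic rather than a short test run.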

Case studies that stop at pilots

Case studies that end at a “successful pilot” or “POC” can be a warning sign.

Real deployments usually include concrete outcomes such as processing 10 million transactions monthly, running across 50 locations, or reducing processing time by 40% in production.

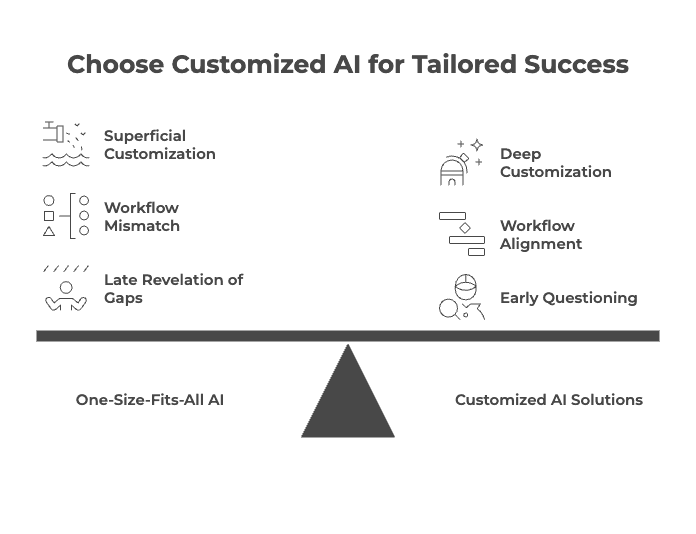

Red Flag #3: One-Size-Fits-All AI Solutions

Vendors who pitch a one-size-fits-all AI solution often reveal the gaps only after contracts are signed.

“Customization” vs reality

For some vendors, “customization” simply means letting you write your own prompts. Real customization goes much deeper.

It involves adapting models to your domain data, embedding business guardrails, integrating internal data sources and access controls, and aligning the system with how your users actually work.

The workflow mismatch

Different industries have very different requirements. Legal research, healthcare triage, and retail recommendations all involve different latency needs, error tolerance, and human oversight.

When a vendor claims the same architecture works for every use case, it usually means they have not fully considered the realities of your workflow.

How strong vendors behave

Experienced AI partners ask detailed questions early. They try to understand your data quality, edge cases, failure scenarios, and existing processes before proposing a solution.

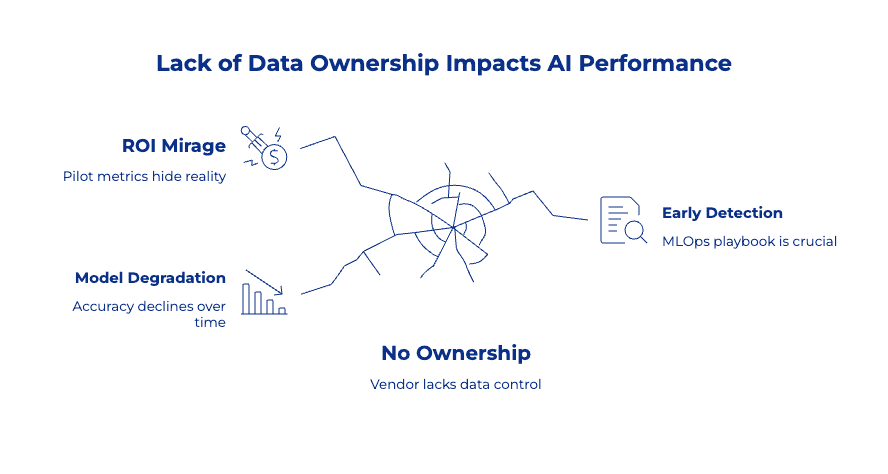

Red Flag #4: No Ownership of Data, MLOps, or Post-Launch Performance

AI systems degrade over time.

Data changes, user behavior shifts, and models drift. Without monitoring, retraining, and performance tracking, model accuracy declines and business value erodes.

The vanishing act after launch

Some vendors promise “ongoing support,” but after deployment, the project disappears into a ticketing system handled by engineers who were never involved in building the system.

There are no monitoring dashboards, no automated alerts when accuracy drops, and no defined retraining cycles. You only discover problems once users start complaining.
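
An automated accuracy alert does not need to be elaborate. The following is a minimal sketch, assuming ground-truth labels eventually arrive for each prediction. The class name `AccuracyMonitor`, the window size, and the threshold are illustrative choices, not a standard API.

```python
from collections import deque


class AccuracyMonitor:
    """Tracks accuracy over the most recent predictions and flags drops below a threshold."""

    def __init__(self, window=100, threshold=0.9):
        # deque with maxlen silently discards the oldest outcome once the window is full.
        self.outcomes = deque(maxlen=window)
        self.threshold = threshold

    def record(self, prediction, actual):
        """Store whether a prediction matched the label that later arrived."""
        self.outcomes.append(prediction == actual)

    def accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self):
        # Only alert once the window is full, to avoid noisy alerts on tiny samples.
        full = len(self.outcomes) == self.outcomes.maxlen
        return full and self.accuracy() < self.threshold


# Hypothetical usage with a very small window for readability.
monitor = AccuracyMonitor(window=4, threshold=0.75)
for predicted, actual in [("a", "a"), ("b", "b"), ("a", "a"), ("b", "b")]:
    monitor.record(predicted, actual)
healthy = monitor.should_alert()

for predicted, actual in [("a", "b"), ("b", "a")]:
    monitor.record(predicted, actual)
degraded = monitor.should_alert()

print(healthy, degraded)
```

In a real system the alert would page an owner or open an incident. The point to verify with a vendor is that such a check exists and that someone is accountable for responding to it.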

Model drift is inevitable

Data distributions change. Competitors adapt. Customer behavior evolves. Real AI partners plan for this from the beginning.

They build drift detection, performance benchmarking, and retraining pipelines into the architecture instead of treating them as add-on services discovered later.
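
One common way to quantify drift in a single numeric feature is the Population Stability Index (PSI), which compares the binned distribution of recent data against a baseline. The sketch below is one illustrative approach, not a description of any particular vendor's pipeline. The bin count, the `eps` floor, and the 0.25 alert threshold (a widely used rule of thumb) are assumptions to tune for your data.

```python
import math


def psi(baseline, current, bins=10, eps=1e-6):
    """Population Stability Index between two numeric samples, binned on the baseline's range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(sample):
        counts = [0] * bins
        for x in sample:
            idx = int((x - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values into the edge bins
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm below is always defined.
        return [max(c / len(sample), eps) for c in counts]

    expected = proportions(baseline)
    actual = proportions(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


# Hypothetical feature values: a uniform baseline and a shifted production sample.
baseline = [i / 100 for i in range(100)]
shifted = [x + 0.3 for x in baseline]

stable_score = psi(baseline, baseline)
drift_score = psi(baseline, shifted)
needs_review = drift_score > 0.25  # assumed threshold for "significant" drift

print(f"stable: {stable_score:.3f}, shifted: {drift_score:.3f}, review: {needs_review}")
```

A check like this is typically run on a schedule for each important input feature, with results feeding the same alerting and retraining process the vendor describes in their playbook.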

The ROI mirage

Many vendors highlight strong pilot metrics but avoid long-term performance commitments. Watch for contracts with no SLAs around prediction accuracy, no defined model refresh cycles, and no shared ownership of business outcomes.

If a vendor will not stand behind sustained performance, the system may not be ready for production.

How to spot it early

Ask to see their MLOps playbook before signing.

- How are models versioned?

- How is drift detected?

- How often are models retrained?

- Who owns the feedback loop?

If the answer is “we’ll figure that out together,” you are likely hiring consultants rather than buying a solution.

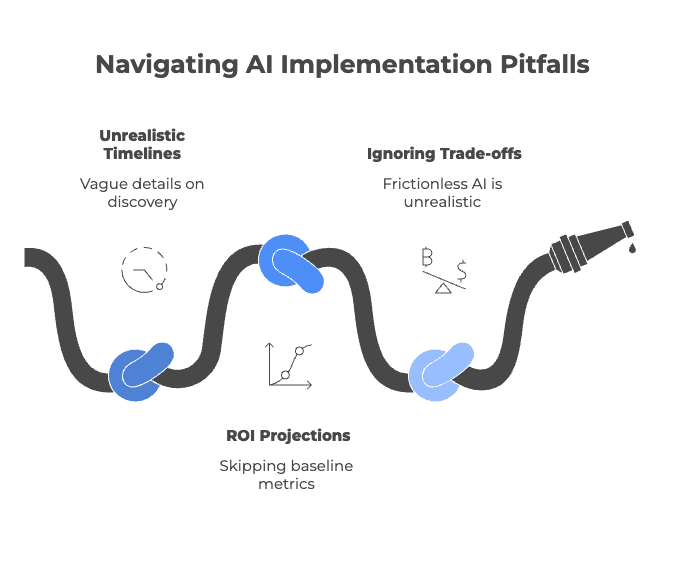

Red Flag #5: Unrealistic Timelines and Guaranteed ROI

Some vendors promise “production-ready AI in weeks.”

But when you ask about discovery, data readiness, or integration planning, the details are vague or missing.

Enterprise AI does not eliminate complexity. It only manages it.

“Production AI in weeks” without discovery

Successful AI projects require careful groundwork. Teams must prioritize use cases, assess data readiness, design system architecture, review security requirements, and plan organizational change.

Vendors who promise rapid deployment without discussing these steps are either inexperienced or deliberately oversimplifying the work. Neither is acceptable at enterprise scale.

ROI projections without clear assumptions

Credible ROI models start with baseline metrics. They explain current performance, expected improvements, adoption timelines, and cost structures.

Projections that skip these details are not forecasts. They are marketing claims presented as financial models.
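
To make the contrast concrete, here is a minimal sketch of an ROI model in which every assumption is a named input. All field names and figures are hypothetical and chosen only to show the mechanics; a real model would add time horizons, ramp-up curves, and risk adjustments.

```python
from dataclasses import dataclass, replace


@dataclass
class RoiAssumptions:
    baseline_cost_per_case: float  # what it costs to process one case today
    expected_cost_per_case: float  # projected cost per case with the AI system
    cases_per_year: int
    adoption_rate: float           # share of cases actually routed through the system (0 to 1)
    build_cost: float              # one-time implementation cost
    annual_run_cost: float         # monitoring, retraining, hosting, support


def first_year_roi(a):
    """First-year ROI as (savings - total cost) / total cost."""
    saving_per_case = a.baseline_cost_per_case - a.expected_cost_per_case
    savings = saving_per_case * a.cases_per_year * a.adoption_rate
    total_cost = a.build_cost + a.annual_run_cost
    return (savings - total_cost) / total_cost


# Hypothetical scenario: the vendor's pitch assumes every case uses the system.
pitch = RoiAssumptions(
    baseline_cost_per_case=12.0,
    expected_cost_per_case=7.0,
    cases_per_year=100_000,
    adoption_rate=1.0,
    build_cost=200_000.0,
    annual_run_cost=50_000.0,
)
# Same scenario, but only half of the cases are routed through the system in year one.
realistic = replace(pitch, adoption_rate=0.5)

print(f"pitch ROI: {first_year_roi(pitch):.0%}, realistic ROI: {first_year_roi(realistic):.0%}")
```

Changing a single assumption, here the adoption rate, moves the result from a strong return to break-even. That sensitivity is why a projection without stated assumptions cannot be evaluated.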

Ignoring trade-offs and constraints

Every AI deployment involves trade-offs. Speed may reduce accuracy. Customization can increase complexity. Automation often requires human oversight.

Vendors who present AI as frictionless are not being optimistic. They are ignoring operational realities.

How Enterprises Can Validate AI Vendor Claims Before Signing a Contract

Before signing a contract, enterprises should ask vendors for production proof, architecture transparency, measurable performance data, and clear ownership of MLOps.

Vendors who hesitate to provide documentation or discuss failure scenarios should be treated cautiously.

1. What a Credible AI Vendor Should Be Able to Explain Clearly

A credible vendor should be able to explain how their system creates value without relying on jargon.

They should be able to address the following clearly and directly:

- Use-case specificity: What exact business problem is being solved? Why was this use case prioritized?

- Data requirements and readiness: What data is required, in what format, and what quality thresholds must it meet?

- Architecture and integration approach: How does the system integrate with existing platforms? What APIs, security controls, and governance layers are involved?

- Model lifecycle management: How is model drift detected? How often are models retrained? Who owns ongoing monitoring?

- Adoption and workflow impact: Who will use the system? What workflows change? What behaviors must shift for the solution to work effectively?

If these explanations remain vague or abstract, the underlying capability may be just as unclear.

2. What Documentation or Proof Should They Willingly Provide

A credible AI partner doesn't hesitate when asked for evidence.

Look for:

- Production case studies, not pilots: named clients or, at a minimum, industry-specific deployments at scale

- Architecture diagrams from real deployments: redacted is acceptable; vague or fabricated is not

- Baseline-to-outcome ROI models: assumptions explicitly stated, not implied

- Performance benchmarks: accuracy, latency, false positive/negative rates, uptime

- Security and compliance documentation: data handling standards, certifications, and audit readiness

If everything is "confidential" and nothing is demonstrable, that's not discretion. It's a gap.

3. Questions That Surface Overpromising Immediately

Ask these directly. Watch for hesitation or rehearsed optimism.

- "What failed in your last deployment - and how did you respond?"

Mature vendors discuss trade-offs openly. Immature ones reframe the question.

- "What must be true inside our organization for this ROI to materialize?"

This surfaces hidden dependencies the vendor may be counting on but not disclosing.

- "What would cause this project to underperform?"

If the answer is "nothing," you have your answer.

- "How long before measurable impact - realistically?"

Compare what you hear against the actual pace of enterprise change.

- "What ongoing costs begin after go-live?"

Drift monitoring, retraining cycles, integration maintenance, and user enablement rarely appear in initial proposals.

What Real AI Delivery Looks Like in Enterprise Environments

Real AI delivery in enterprise environments is iterative, measurable, and tightly integrated with existing operations.

It usually starts with one clearly defined business problem, supported by transparent architecture and ongoing monitoring after launch.

1. Transparent architecture

A production-ready system clearly maps data sources, data flows, and system integrations.

Governance controls, compliance requirements, and trade-offs such as speed versus accuracy should be visible from the beginning. Transparency prevents surprises later in deployment.

2. Phased deployment

Successful AI systems rarely appear fully formed. They are deployed in stages, with teams tracking baseline metrics and gradually expanding the scope.

Drift detection, retraining cycles, and user feedback help improve performance over time.

3. Shared ownership after launch

Enterprise AI requires shared responsibility between the vendor and the client.

Both sides should define operational roles, review performance regularly, and refine the system as data and business needs evolve.

In practice, go-live is only the beginning of the real work.

Conclusion: Avoiding AI Failure Starts With Knowing What to Reject

Ignoring AI vendor red flags can turn promising initiatives into stalled experiments that drain budgets and erode trust. Real value only emerges when AI systems move beyond pilots and are monitored, retrained, and managed in production.

If you're evaluating an AI initiative, take the time to validate architecture clarity, production readiness, and long-term operating costs before signing a contract.

Sometimes it also helps to get an independent technical review.

At Imaginovation, we often help teams assess AI architectures, evaluate vendor claims, and identify delivery risks before projects move forward.