Any AI coding tool can generate syntactically correct code when you give it a prompt. But can these tools build enterprise software? And the real question: do we even need software developers anymore?

Software development has always been more than designs and code. It involves securing systems, understanding what GDPR, SOC 2, and internal company policies demand, and knowing who is responsible when something fails.

It requires edge-case reasoning and institutional knowledge that no prompt can offer. Enterprise software needs someone to make architectural judgments and lay out a plan for how systems should be structured over time.

But AI has changed how developers work.

The role of a developer has shifted from writing code to validating, orchestrating, and owning outcomes.

And that shift is exactly why enterprise companies still need software developers, even if AI writes the code.

What "AI Writing Code" Actually Means in Enterprise Environments

When we say AI writes code, what we really mean is this: you give a natural language prompt, and the tool returns syntactically correct output. It handles boilerplate, unit tests, and standard functions reliably.

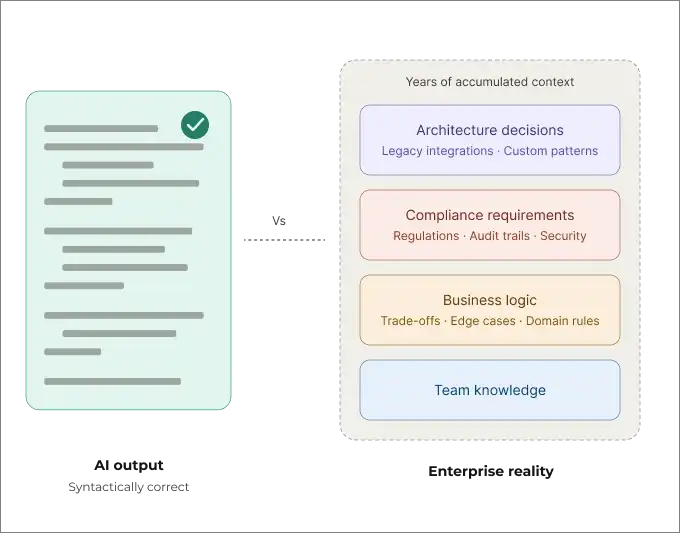

But that doesn't mean it understands your system.

It doesn't know your architecture, your compliance requirements, or the business logic that has evolved over years of trade-offs. In enterprise environments, that's the gap between a useful productivity tool and something that can build production software.

Enterprise systems live far outside the neat boundaries of a prompt. Production code carries years of accumulated logic, non-standard integrations, regulatory constraints, and architectural decisions made long before the current task existed. That context rarely lives in one place, and it's never captured in a single prompt.

Modern models do more than pure pattern matching. They exhibit real reasoning capabilities. But enterprise software depends on how your specific system behaves, what constraints it operates under, and how teams maintain it over time. No amount of reasoning closes that gap without context.

AI can produce code that is correct in isolation. Enterprise software has to be correct in context: within a particular system, under specific rules, maintained by real teams.

Why AI Code Generation ≠ Enterprise Software Development

Code generation using AI is autocomplete at scale. Enterprise software development is judgment under pressure: knowing what to build, why it holds together architecturally, and who answers when it breaks at 2 a.m.

AI can produce code, but it cannot own a system, a decision, or a consequence, at least for now.

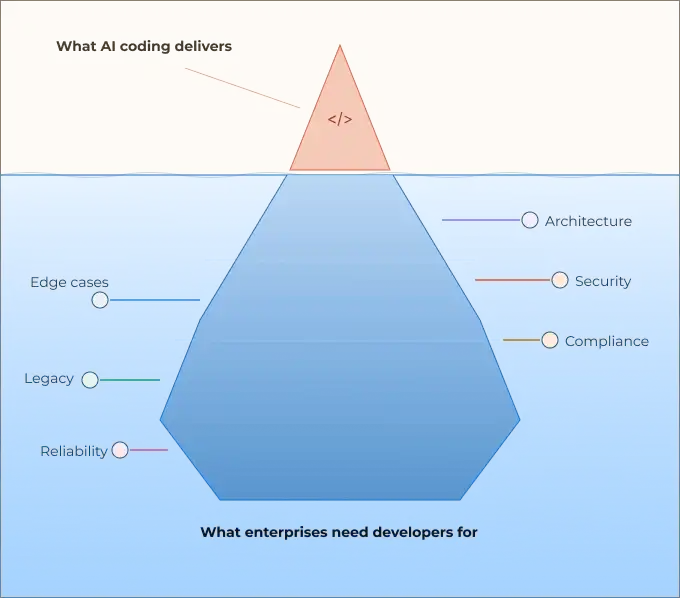

The gap becomes clearer when you look at what enterprise development actually involves beyond writing code: designing systems, owning outcomes in production, and operating within constraints that AI cannot see.

Let's break that down.

1. Writing Code

Code generation is impressive at the line-and-function level. With a well-scoped prompt, a model can produce anything from a working API endpoint to a database query, often faster than a senior engineer typing from scratch.

But writing new code is a surprisingly small part of a developer's job. IDC's 2024 report found that application development accounts for roughly 16% of developer time. Robert C. Martin's widely cited observation puts the reading-to-writing ratio at over 10 to 1.

The rest involves reading existing code, understanding intent, tracing failures, negotiating trade-offs, and making calls that have no clean answer.

2. Designing Systems

System design is where enterprise complexity becomes unforgiving. A prompt cannot tell an AI things like:

- The payments service was built by a team that no longer exists, and any change to it requires coordination across three compliance teams

- The company chose eventual consistency three years ago for performance reasons, and reversing that decision now means re-migrating 400 million records

- The new microservice you're generating will be owned by a team in a different time zone with different on-call rotations

Enterprise systems are not greenfield. They carry technical debt from decisions that were postponed, and integrations that exist only because two systems were forced together after an acquisition.

Good system design in this environment requires historical context (why past trade-offs were made), constraint mapping (non-negotiable regulatory, contractual, and operational boundaries), failure mode reasoning ("How does this fail, and how badly?"), and organizational awareness (who depends on this, and who will be broken by changing it).

An LLM generating code has none of this. It reasons about the code in front of it, not the system behind it.

3. Owning Outcomes in Production

Production is not a test environment. In enterprise software, a bug isn't a failed unit test. It is a revenue event, a compliance incident, a customer trust failure, or, in regulated industries, a legal exposure.

The cost of a production failure is measured in SLA breaches, incident reports, and post-mortems with executive visibility.

In enterprise environments, ownership means:

- You are accountable when the system behaves unexpectedly

- You carry the context of past failures and use it to design this one differently

- You made a judgment call on a trade-off with no perfect answer, and you stand behind it

- You will be the one debugging it at 2 a.m. when the monitoring alert fires

Code generation produces output. It does not produce accountability. It has no stake in correctness beyond the prompt, no memory of the last outage, and no ability to be paged.

4. The Enterprise Multiplier

All of this is compounded by scale. Enterprise software means:

- Distributed ownership: multiple teams working together with their own standards and incentives

- Regulatory surface area: GDPR, SOX, HIPAA, and PCI-DSS requirements are built into architectural decisions

- Longevity requirements: systems that will operate and evolve over 10 to 20 years

- Integration density: not three services talking to each other, but hundreds, often across organizational and vendor boundaries

In this environment, the dangerous illusion is mistaking code output for engineering judgment. A junior developer who generates a working feature with AI assistance has not automatically developed the capacity to design the system it lives in, anticipate how it will fail, or own what happens when it does.

The code is the easy part. The enterprise is the hard part. And AI, as it stands, only helps with one of them.

What Enterprises Still Need Software Developers For

Enterprises still need developers because software that runs at scale carries legal liability and cannot be allowed to fail. It requires human judgment, institutional memory, and accountability, none of which can be prompted into existence or delegated to a model.

1. System Architecture and Long-Term Design

Architecture is a sequence of irreversible decisions made under incomplete information.

Developers aren't just choosing patterns; they're encoding organizational constraints into service boundaries, data ownership, and coupling decisions that will either pay dividends or extract a tax for the next decade.

AI can generate a service. It cannot decide where that service's boundary should be, or why that boundary will still make sense when the company doubles in size, changes direction, or acquires a competitor.

2. Security, Compliance, and Accountability

Security is an architectural property, not a feature layer.

Threat modeling happens at design time, and in regulated environments (SOX, HIPAA, PCI-DSS), every decision creates a legal and financial trail that a named human must own and defend.

The data supports this concern. Veracode's 2025 GenAI Code Security Report found that AI-generated code contains 2.74x more vulnerabilities than human-written code, tested across 100+ LLMs and four programming languages. A separate 2026 study found that one in four AI code samples contains a confirmed security vulnerability, with 45% introducing OWASP Top 10 flaws.

If a regulator asks why customer data was handled a certain way, "the model suggested this pattern" is not an answer. AI-generated code has no legal standing, no liability, and no awareness of what noncompliance actually costs.

3. Exception Handling and Edge Cases

The expected path is easy. The 99th-percentile failure is where enterprise systems earn their credibility.

It's where payment gateways time out mid-transaction, where downstream systems send malformed responses under peak load, and where a database failover happens during a live migration.

Experienced developers know these failures firsthand. They don't just code defensively against edge cases. They've lived through them.

They know the third-party APIs that lie about their error codes and the ones where the failure cascades catastrophically. This is not in any training data.
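One habit that comes out of those incidents can be sketched in code: a timeout or a malformed response is treated as an unknown outcome, not as a failure, because the money may already have moved. The example below is a minimal sketch under stated assumptions. `charge`, `Outcome`, `ChargeResult`, and the `status` field are hypothetical names, and `gateway_call` stands in for a real payment client that is not modeled here.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    CONFIRMED = "confirmed"
    DECLINED = "declined"
    UNKNOWN = "unknown"  # timeout or unusable response: the charge may or may not exist


@dataclass
class ChargeResult:
    outcome: Outcome
    detail: str


def charge(gateway_call, amount_cents: int) -> ChargeResult:
    """Call a hypothetical gateway function and classify what came back.

    gateway_call is any callable that takes amount_cents and returns a dict.
    """
    try:
        response = gateway_call(amount_cents)
    except TimeoutError:
        # A timeout mid-transaction says nothing about whether the charge went through.
        return ChargeResult(Outcome.UNKNOWN, "gateway timed out; reconcile before retrying")

    # Do not trust the shape of a downstream response, especially under load.
    status = response.get("status") if isinstance(response, dict) else None
    if status == "approved":
        return ChargeResult(Outcome.CONFIRMED, "approved")
    if status == "declined":
        return ChargeResult(Outcome.DECLINED, "declined")
    return ChargeResult(Outcome.UNKNOWN, f"malformed response: {response!r}")
```

The design choice worth noting is the third state. Code that only knows "success" and "failure" will retry an unknown charge as if it had failed, and that is how a customer ends up charged twice.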

4. Legacy System Integration

Most enterprise software lives alongside systems built in technologies that predate the current team, sometimes by decades: COBOL batch processes feeding modern APIs, ERP systems with undocumented side effects, mainframe data models abstracted behind fragile service layers.

This work is entirely about what isn't documented. Developers doing it carry historical constraints from previous integrations, know undocumented risks, and understand which assumptions will silently break if violated. AI only sees what it's shown.

5. Reliability, Monitoring, and Incident Response

Shipping code is the start. The real work is keeping it running: designing for visible failure, calibrating alerts that signal rather than add noise, and building dashboards that tell the on-call engineer what broke and why in seconds.

When incidents happen, a developer investigates, decides whether to roll back or patch forward, informs stakeholders, and runs the post-mortem that prevents recurrence.

This cycle of designing, observing, failing, and learning builds operational judgment that a code generator cannot produce.

What Goes Wrong When Enterprises Over-Rely on AI-Written Code

Over-reliance on AI-written code doesn't fail loudly. It fails gradually. Systems accumulate debt quietly, and the gaps only surface under pressure: during an incident, an audit, or a security breach.

1. Technical Debt

AI generates code that works for the prompt, not for the system. Without architectural judgment guiding each output, the codebase accumulates inconsistent patterns and redundant abstractions that are locally reasonable but globally expensive.

And it happens fast. Debt created at the pace of AI arrives faster than any team can absorb it.

Teams that rush to ship without owning the design decisions end up with a codebase nobody fully understands, one that costs more to refactor than it cost to write.

2. Silent Failures

AI-generated code tends to handle the happy path well and the failure path poorly. Edge cases that weren't in the prompt simply aren't handled, and unlike a syntax error, a missing failure mode doesn't announce itself until conditions are exactly wrong.

Silent failures are the most dangerous class of enterprise bugs. A payment that processes twice, a record that gets partially written, an alert that never fires: these rarely surface in testing.

They don't trigger monitoring. They're discovered through downstream consequences, usually long after the damage is done.
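The double payment is a useful example because the usual guard, an idempotency key, is exactly the kind of thing a prompt rarely asks for. The sketch below is illustrative only: `PaymentProcessor` and its fields are hypothetical, and the in-memory dictionary stands in for the durable store a real system would need.

```python
class PaymentProcessor:
    """In-memory sketch; a real system would keep keys in a durable store."""

    def __init__(self):
        self._results = {}      # idempotency_key -> result of the first attempt
        self.charges_made = 0   # counts how many charges were actually created

    def process(self, idempotency_key: str, amount_cents: int) -> dict:
        # A retry with the same key returns the first result instead of charging again.
        if idempotency_key in self._results:
            return self._results[idempotency_key]
        self.charges_made += 1
        result = {"charge_id": self.charges_made, "amount_cents": amount_cents}
        self._results[idempotency_key] = result
        return result


processor = PaymentProcessor()
first = processor.process("order-1", 500)
retry = processor.process("order-1", 500)  # e.g. the client retried after a timeout
print(first == retry, processor.charges_made)
```

Without the key lookup, the retry would create a second charge, and nothing in a happy-path test would notice.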

3. Security Risks

Models generate code from patterns in their training data, which includes insecure patterns, outdated libraries, and deprecated approaches. Without a developer actively threat-modeling the output, vulnerabilities ship alongside features: SQL injection surfaces, secrets get hardcoded, input validation gets skipped.
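Two of the patterns named above, SQL injection and hardcoded secrets, have well-known fixes that a reviewer can check for. The following is a minimal sketch using Python's standard `sqlite3` module. The `users` table, the function names, and the `PAYMENTS_API_KEY` variable are assumptions made up for illustration.

```python
import os
import sqlite3


def find_user(conn: sqlite3.Connection, username: str):
    # Placeholder binding keeps user input out of the SQL text itself.
    cur = conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    )
    return cur.fetchall()


def get_api_key() -> str:
    # PAYMENTS_API_KEY is a hypothetical name; the point is that the secret
    # is read from the environment rather than written into the source file.
    key = os.environ.get("PAYMENTS_API_KEY")
    if not key:
        raise RuntimeError("PAYMENTS_API_KEY is not set")
    return key


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
conn.executemany("INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)])

print(find_user(conn, "alice"))         # the one matching row
print(find_user(conn, "x' OR '1'='1"))  # the input is matched as a literal string
```

Had the query been built by string concatenation, the second call would have returned every row in the table. Catching that difference in review is a human responsibility, whoever or whatever wrote the first draft.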

The subtler risk is false confidence.

AI code that passes code review and automated scanning can still contain architectural vulnerabilities: violated trust boundaries, open privilege escalation routes, data exposure baked into the design. These require human security reasoning, not linting.

4. Loss of System Understanding

The greatest risk isn't technical. It's organizational.

When developers use AI to generate code they don't fully read, debate, and own, institutional knowledge of how the system works stops accumulating.

Over time, developers lose the ability to reason about the system as a whole. The result is a codebase nobody understands and nobody can safely change.

Some enterprises are already implementing countermeasures: mandatory code comprehension reviews, pair programming alongside AI tools, stricter PR standards.

But without deliberate effort, this remains a structural fragility that builds quietly until something forces the team to confront it.

How the Role of Enterprise Developers Is Changing

The enterprise developer's role is evolving from writing code to managing it, and from manual implementation to AI-assisted orchestration, anchored by the judgment and accountability only a human can provide.

1. From Writing to Validating

The primary output of a developer is no longer lines of code; it's decisions about code. That means reading AI-generated output critically and identifying what's missing or subtly wrong, approving only what's fit for a production system that carries real consequences, and catching security gaps, edge case omissions, and architectural misalignments before they ship.

This requires more judgment, not less. A developer who can't write code is certainly not equipped to validate it.

2. From Implementing to Orchestrating

Developers are increasingly responsible for composing systems from AI-generated components, third-party services, and internal platforms. The implementation work decreases; the integration and coordination work increases.

That means managing contracts and interfaces between assembled components, ensuring coherent behavior across systems built from different sources, owning failure at the seams where components meet and assumptions break, and coordinating across teams, vendors, and platforms rather than authoring every layer.

The craft shifts from authorship to architecture, and from execution to design.

3. From Speed to Safety and Resilience

AI increases the speed of code production. The burden on enterprise developers is making sure that speed doesn't compromise safety. That means owning:

- Observability: instrumentation and logging built in by design, not retrofitted

- Recoverability: rollback paths and failure boundaries defined before they're needed

- Defensibility: architectural decisions that hold up under regulatory or post-mortem scrutiny

- Pacing: knowing when to slow down because the risk profile demands it

The developer's value proposition in an AI-augmented environment isn't velocity. It's the judgment that keeps velocity from becoming liability.

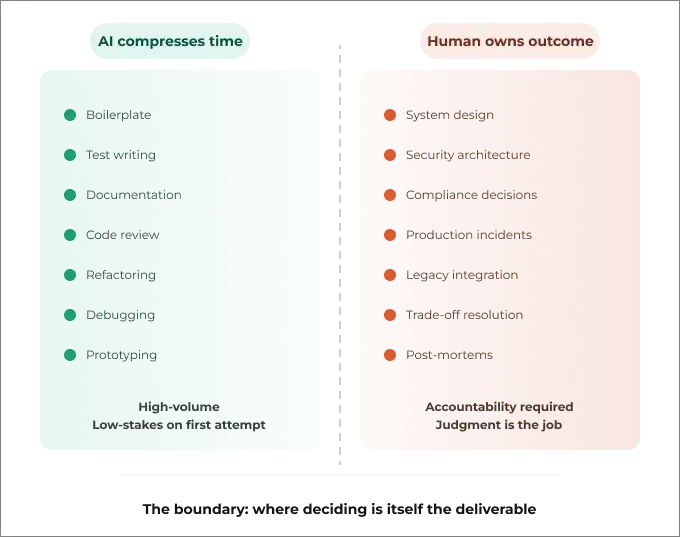

When AI Should Assist Developers, Not Replace Them

AI delivers real leverage when it operates as a tool under human direction. The moment it's treated as a decision-maker on architecture, security, or compliance, the enterprise has substituted automation for accountability and probability for judgment.

Where AI Delivers Leverage

AI is most valuable in the parts of development that are high-volume and low-stakes on the first attempt. Strong use cases include:

- Boilerplate generation: scaffolding, CRUD operations, repetitive patterns across services

- Test writing: generating unit and integration test cases from existing function signatures

- Documentation: drafting inline comments, API docs, and README content from code context

- Code review assistance: surfacing obvious issues, inconsistent patterns, and style violations

- Refactoring support: restructuring well-understood code with clear before/after intent

- Debugging acceleration: narrowing down error sources and suggesting candidate fixes

- Prototyping: rapidly exploring what a solution might look like before committing to an approach

In all of these, AI compresses time. A developer still owns the output but reaches it faster.

Where Human Judgment Is Non-Negotiable

There is a clear set of decisions where removing the human doesn't just introduce risk. It eliminates the accountability structure the enterprise depends on:

- System design and service boundaries: decisions that will constrain the codebase for years

- Security architecture: threat modeling, trust boundaries, privilege design

- Compliance decisions: what data is collected, how it's stored, who can access it

- Production incidents: diagnosis, escalation, rollback calls under pressure

- Legacy integration: navigating undocumented behavior and minimizing blast radius

- Trade-off resolution: choosing between competing constraints with no clean answer

- Post-mortems and learning: extracting institutional knowledge from failures

These aren't tasks that AI does poorly. They're tasks where the act of a human deciding is itself part of what the enterprise requires.

Why Human-in-the-Loop Matters in Enterprises

In consumer software, a bad AI-generated decision produces a bug. In enterprise software, it can produce a compliance breach, a security incident, or an outage with contractual consequences. The stakes change what the loop must contain.

Human-in-the-loop in an enterprise context means no AI-generated code enters production without developer sign-off. It means architecture decisions precede AI-assisted implementation, not the other way around.

Every system decision traces back to a named engineer who understood and endorsed it. AI output is treated as a draft, not a deliverable. And monitoring, alerting, and incident response remain human-designed and human-executed.
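Some teams encode these rules as a deployment gate. The sketch below shows one way that might look, under loud assumptions: `ChangeRequest`, `may_deploy`, and every field name are hypothetical, and a real gate would read this information from the team's review tool and design records instead of a hand-built object.

```python
from dataclasses import dataclass, field
from typing import Optional, Set, Tuple


@dataclass
class ChangeRequest:
    # All fields are hypothetical stand-ins for data a review tool would supply.
    title: str
    ai_generated: bool
    approvers: Set[str] = field(default_factory=set)  # named engineers who signed off
    design_ref: Optional[str] = None                  # ID of the agreed design decision


def may_deploy(change: ChangeRequest) -> Tuple[bool, str]:
    """Return (allowed, reason) for a proposed production change."""
    # Architecture decisions precede implementation, AI-assisted or not.
    if change.design_ref is None:
        return False, "no design decision on record"
    # AI output is a draft until a named engineer endorses it.
    if change.ai_generated and not change.approvers:
        return False, "AI-generated change has no named engineer sign-off"
    owners = ", ".join(sorted(change.approvers)) or "author"
    return True, f"traceable to: {owners}"


draft = ChangeRequest("add retry logic", ai_generated=True, design_ref="ADR-12")
print(may_deploy(draft))

draft.approvers.add("j.doe")
print(may_deploy(draft))
```

A check like this does not create accountability by itself. It only makes the absence of a sign-off visible before the change ships.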

The goal isn't to slow AI down. It's to make sure the speed AI provides doesn't sever the connection between decisions and consequences, which is the connection that enterprise software, regulation, and organizational trust are all built on.

Conclusion

Software teams still matter because the hardest parts of building technology (architecture, accountability, institutional memory) have always required human decision-making.

The new reality is straightforward: give developers better tools; don't replace the developers. Use AI to sharpen thinking, not substitute for it. And deploy with the understanding that someone is ultimately going to own the outcome.

The organizations that build the most durable software in the next decade won't be the ones generating the most code. They'll be the ones that pair AI's speed with human judgment, and know exactly where the boundary between the two should sit.

If you need a realistic partner to help you figure out where AI is a net positive in your engineering organization and where it introduces risk, the team at Imaginovation can help you build that roadmap.