Did you know that around 24% of first-year college students in North America won't come back for year two? For online programs, it's even worse: dropout rates hit 40–50% before students even reach the halfway mark.

That's not just a statistic. It's debt with no degree attached. It's $10,000–$25,000 in forfeited tuition per student walking out the door. And for the students themselves, it's a confidence hit that lingers.

Quick Stats: AI in Higher Ed

| $404B | +18% | 80% |
|---|---|---|
| Global EdTech market size, 2025 (HolonIQ, 2024) | Avg. dropout rate reduction with AI gamification in e-learning (OECD, 2022) | Higher-ed administrators motivated to adopt AI for efficiency (Ellucian/EDUCAUSE, 2024) |

Here's the thing: this problem is fixable with AI-driven platforms. And by AI-driven platforms, I don't mean those one-off chatbot experiments.

An AI-integrated education platform can flag disengagement weeks before a student stops logging in. It can adjust content to each learner's pace and alert advisors before a withdrawal form ever gets filed.

Let's explore how AI in education can improve engagement and retention. We will cover adaptive learning systems, intelligent tutoring systems, and AI-powered LMS platforms with built-in predictive analytics.

Let's start with the main question first.

Why Are Student Engagement and Retention Dropping?

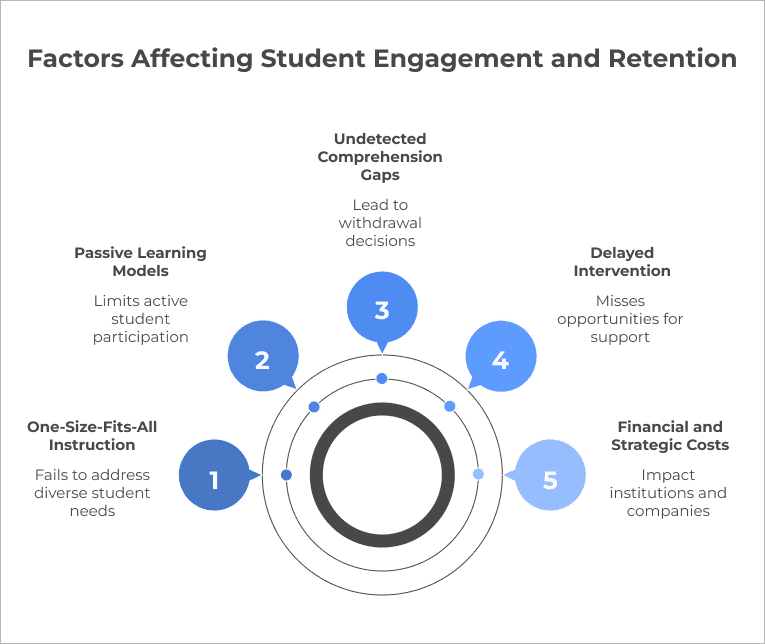

Retention and engagement rates are falling largely because existing learning models and processes teach students passively.

These models fail to account for each student's learning pace and the gaps in their understanding.

What's Driving Disengagement in North American Classrooms?

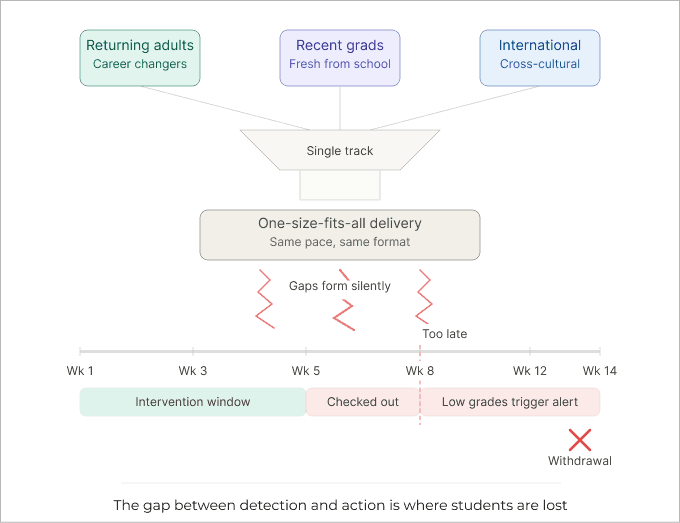

The retention crisis is less of a motivation problem and more of a structural one. The core issue is one-size-fits-all instructional delivery, which cannot meet the different needs of returning adults, recent graduates, and international students placed in the same course track.

In such scenarios, comprehension gaps go undetected until they become withdrawal decisions.

Moreover, by the time low grades trigger an advisor call, the student has already mentally checked out. Effective intervention needs to happen within the first three to five weeks, yet most institutions have no mechanism to act that early at scale.

What Does It Actually Cost When Retention Fails?

Institutions lose $10,000–$25,000 per departing student in forfeited tuition and recruitment costs. At scale, those per-student losses compound into a multimillion-dollar annual burden for a mid-sized university.
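
To make the scale concrete, here is a rough back-of-the-envelope calculation. The 24% attrition rate and the $10,000–$25,000 range come from this article; the 2,000-student first-year cohort and the function name `annual_attrition_loss` are assumptions chosen purely for illustration.

```python
def annual_attrition_loss(cohort_size, attrition_rate, loss_per_student):
    """Estimate the yearly value lost when part of a cohort does not return."""
    departing = round(cohort_size * attrition_rate)
    return departing * loss_per_student


COHORT_SIZE = 2_000      # hypothetical first-year cohort at a mid-sized university
ATTRITION_RATE = 0.24    # first-year attrition figure cited in this article

low = annual_attrition_loss(COHORT_SIZE, ATTRITION_RATE, 10_000)
high = annual_attrition_loss(COHORT_SIZE, ATTRITION_RATE, 25_000)
print(f"${low:,} to ${high:,} per year")  # $4,800,000 to $12,000,000 per year
```

Under these assumptions, 480 departing students translate into roughly $4.8M to $12M a year. Swap in your own cohort size and attrition rate to get an institution-specific estimate.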

EdTech companies live and die by completion rates — the primary investor KPI. When churn consistently exceeds benchmarks, it signals a broken product. Several notable down-rounds since 2022 trace directly to platforms that could acquire learners but not keep them.

Corporate L&D teams face a subtler but equally tangible cost. If 60% of employees enrolled in an upskilling program never finish, the organization spends the budget without gaining the capability — and the entire investment goes unrealized.

Retention rates by learning modality

| Modality | Avg. retention rate | Avg. course completion | Risk profile | Primary failure point |

|---|---|---|---|---|

| Traditional in-person | 72–76% | 65–70% | Moderate | Fixed pacing; limited advisor bandwidth |

| Basic online (LMS only) | 48–60% | 40–55% | High | Passive content; no early-alert system; social isolation |

| Hybrid (blended, no AI) | 58–66% | 52–63% | Moderate–high | Inconsistent engagement across modalities |

| AI-adaptive platform | 76–85% | 72–82% | Low–moderate | Implementation quality; change management |

How Do AI-Driven Education Platforms Actually Work?

AI-driven education platforms depend on data: to produce accurate results, they continuously collect behavioral data from learners.

That data is fed into algorithms that automate the personalized delivery of content and notifications. A feedback loop then lets the platform respond to each student's progress in real time.

What Is an AI-Driven Education Platform?

An AI-driven education platform uses AI as the core engine of the learning process. It monitors behavior, models the learner's current level of knowledge, and adjusts the content being provided.

That architecture operates across three layers:

- Data layer: Every click, pause, rewatch, quiz attempt, and response time is logged as a behavioral signal. The log captures not just whether a student completed a task, but how.

- Intelligence layer: ML models process these signals to build a live learner profile, identifying knowledge gaps, predicting dropout risk, and estimating optimal content difficulty.

- Decision layer: The system responds by adjusting content paths, triggering nudges, alerting advisors to at-risk learners, and automatically adapting pacing.
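
The three layers can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the class names, event kinds, and thresholds are all hypothetical, and a hand-written rule stands in for the ML models a real platform would use in the middle layer.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Event:
    """Data layer: one logged behavioral signal."""
    student_id: str
    kind: str                       # e.g. "quiz_attempt", "rewatch", "pause"
    correct: Optional[bool] = None  # only set for quiz attempts
    seconds: float = 0.0            # response or viewing time


@dataclass
class LearnerProfile:
    """Intelligence layer output: a summary of one learner's state."""
    student_id: str
    accuracy: float
    rewatch_count: int


def build_profile(student_id: str, events: List[Event]) -> LearnerProfile:
    """Aggregate raw events into a profile (a stand-in for a trained model)."""
    own = [e for e in events if e.student_id == student_id]
    attempts = [e for e in own if e.kind == "quiz_attempt"]
    correct = sum(1 for e in attempts if e.correct)
    accuracy = correct / len(attempts) if attempts else 0.0
    rewatches = sum(1 for e in own if e.kind == "rewatch")
    return LearnerProfile(student_id, accuracy, rewatches)


def decide(profile: LearnerProfile) -> str:
    """Decision layer: map the profile to one action (illustrative thresholds)."""
    if profile.accuracy < 0.5 and profile.rewatch_count >= 2:
        return "alert_advisor"
    if profile.accuracy < 0.5:
        return "offer_remedial_content"
    if profile.accuracy > 0.9:
        return "increase_difficulty"
    return "continue"


events = [
    Event("s1", "quiz_attempt", correct=False, seconds=95.0),
    Event("s1", "rewatch", seconds=240.0),
    Event("s1", "rewatch", seconds=180.0),
    Event("s1", "quiz_attempt", correct=False, seconds=120.0),
    Event("s2", "quiz_attempt", correct=True, seconds=30.0),
]
print(decide(build_profile("s1", events)))  # alert_advisor
print(decide(build_profile("s2", events)))  # increase_difficulty
```

The point of the sketch is the shape of the flow: raw events go in, a profile comes out, and the profile alone determines the next action.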

The key distinction is AI-native versus LMS-with-AI-bolted-on.

Classic LMSs like Moodle, Canvas, and Blackboard were designed for content dissemination and grading.

In these systems, AI is generally added through plugins, such as chatbots and analytics engines, that don't affect the preset course structure.

In AI-native platforms, by contrast, everything operates on the principle of data → intelligence → decision, with each step influencing the next.

Every action generates data, that data fuels the AI models, and the models' insights drive the next decision.

AI technology → function → impact on engagement and retention

| AI technology | Function | Impact on engagement | Impact on retention |
|---|---|---|---|
| Machine learning, adaptive paths | Personalizes content sequence and difficulty in real time based on individual performance signals | Higher relevance; reduced frustration | Fewer dropouts from overwhelm |
| NLP, conversational tutoring | Powers AI tutors and chatbots that respond to free-text questions, explain concepts, and give formative feedback at scale | Active participation; immediate support | Reduces isolation in async learning |
| Predictive analytics, early warning | Scores each learner's dropout risk using behavioral, academic, and engagement signals; triggers advisor alerts before disengagement becomes withdrawal | Flags passive learners early | Enables week-3 intervention |
| Learning analytics, dashboards | Surfaces cohort-level and individual engagement data to instructors and L&D managers in real time | Instructor awareness | Supports targeted outreach |

Which Platform Features Have the Biggest Impact on Engagement?

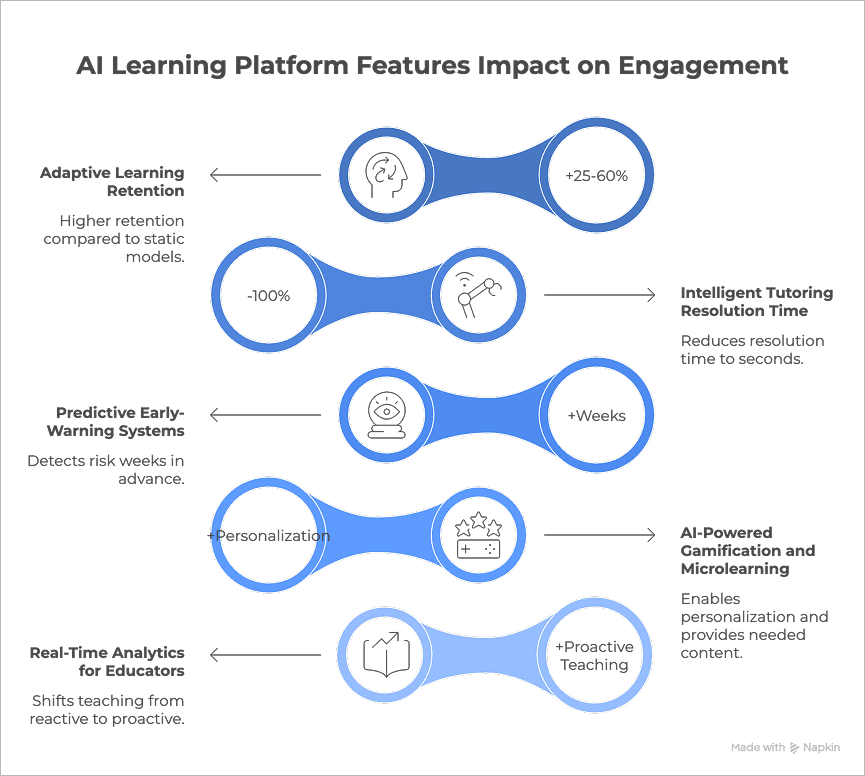

The most effective AI learning platforms combine five key capabilities: adaptive learning, intelligent tutoring, predictive alerts, AI-powered gamification with microlearning, and real-time analytics.

Together, they drive engagement through personalization, early risk detection, and precisely timed action.

Adaptive Learning Paths

Adaptive learning paths continuously adjust difficulty and pacing so learners stay in the "flow zone." It is a proven lever, associated with 25–60% higher retention compared to static learning models.
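
One simple way to picture the adjustment loop is a staircase rule that nudges difficulty up or down to keep recent accuracy inside a target band. This is a hand-written heuristic for illustration only, not how any named platform works; the function name `next_difficulty`, the 60–85% accuracy band, and the 1–10 difficulty scale are all assumptions.

```python
def next_difficulty(current, recent_results, low=0.6, high=0.85,
                    min_level=1, max_level=10):
    """Step difficulty by one level to keep recent accuracy within [low, high].

    recent_results is a list of booleans (True = answered correctly).
    """
    if not recent_results:
        return current  # no evidence yet, so leave the level unchanged
    accuracy = sum(recent_results) / len(recent_results)
    if accuracy > high:
        current += 1    # material is too easy: raise the level
    elif accuracy < low:
        current -= 1    # learner is struggling: lower the level
    return max(min_level, min(max_level, current))


print(next_difficulty(5, [True, True, True, True, True]))  # 6
print(next_difficulty(5, [False, False, True, False]))     # 4
print(next_difficulty(5, [True, True, False, True]))       # 5
```

Production systems typically replace this rule with a statistical model of learner knowledge, but the goal is the same: keep the learner challenged without being overwhelmed.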

Intelligent Tutoring and On-Demand Support

Most drop-offs occur at unresolved "stuck moments." AI tutors cut resolution time to seconds and diagnose the underlying gap rather than just delivering answers, bringing instructor-level support at scale.

Predictive Early-Warning Systems

Disengagement builds gradually and shows up in behavioral signals like login patterns and time-on-task. Early-warning systems detect that risk weeks in advance, and the resulting insights support proactive, targeted, and timely interventions.
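
As a rough sketch of how such scoring might look, the snippet below combines three behavioral signals into a 0–1 risk score using a logistic function. The signal names, weights, bias, and 0.5 alert threshold are invented for illustration and are not fitted to any real data; a real system would learn them from historical outcomes.

```python
import math

# Illustrative weights only; a production model would be trained on real outcomes.
WEIGHTS = {"days_since_login": 0.25, "login_drop": 2.0, "time_on_task_drop": 1.5}
BIAS = -3.0


def dropout_risk(days_since_login, login_drop, time_on_task_drop):
    """Return a 0-1 risk score.

    The *_drop inputs are fractional declines versus the learner's own baseline,
    e.g. 0.7 means 70% fewer logins than usual.
    """
    z = (BIAS
         + WEIGHTS["days_since_login"] * days_since_login
         + WEIGHTS["login_drop"] * login_drop
         + WEIGHTS["time_on_task_drop"] * time_on_task_drop)
    return 1.0 / (1.0 + math.exp(-z))


def flag_at_risk(students, threshold=0.5):
    """Return (student_id, score) pairs at or above the threshold, riskiest first."""
    scored = [(sid, dropout_risk(**signals)) for sid, signals in students.items()]
    flagged = [pair for pair in scored if pair[1] >= threshold]
    return sorted(flagged, key=lambda pair: pair[1], reverse=True)


students = {
    "s1": {"days_since_login": 1, "login_drop": 0.0, "time_on_task_drop": 0.1},
    "s2": {"days_since_login": 6, "login_drop": 0.7, "time_on_task_drop": 0.6},
}
for sid, score in flag_at_risk(students):
    print(sid, round(score, 2))  # s2 0.69
```

Only the second learner crosses the threshold here, which is the kind of output that would feed an advisor alert queue.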

AI-Powered Gamification and Microlearning

One-size-fits-all gamification tends to work at first but loses its effect over time. AI-driven gamification personalizes rewards and challenges, while microlearning delivers exactly what each learner needs next, which keeps them coming back.

Real-Time Analytics for Educators

Real-time analytics shift teaching from reactive to proactive. Live dashboards surface learning gaps and disengagement early, allowing educators to adapt in real time and personalize support at scale.
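
Behind a dashboard like this sits a fairly ordinary aggregation step. The sketch below groups assessment records by module and lists learners who may need outreach; the record layout, the function name `module_summary`, and the 60-point cutoff are assumptions made for the example.

```python
from collections import defaultdict
from statistics import mean


def module_summary(records):
    """Summarize (student, module, score) records per module.

    Returns the average score and the learners scoring below 60 in each module.
    """
    by_module = defaultdict(list)
    for student, module, score in records:
        by_module[module].append((student, score))

    summary = {}
    for module, rows in by_module.items():
        summary[module] = {
            "avg_score": round(mean(score for _, score in rows), 2),
            "below_60": sorted(student for student, score in rows if score < 60),
        }
    return summary


# Synthetic records for illustration only.
records = [
    ("s1", "algebra", 82), ("s2", "algebra", 55), ("s3", "algebra", 91),
    ("s1", "stats", 48), ("s2", "stats", 52),
]
summary = module_summary(records)
print(summary["algebra"])
print(summary["stats"])
```

In this synthetic data the "stats" module stands out, with a low average and two learners below the cutoff, which is the cue for an instructor to revisit that material.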

Feature comparison

| Feature | Engagement Impact | Retention Impact | Evidence |
|---|---|---|---|
| Adaptive Learning | High | High (25–60%) | Adaptive learning studies |
| Intelligent Tutoring | High | High | ITS & AI tutor research |
| Early-Warning Systems | Moderate | High | Student success data |
| AI Gamification | High | Moderate | Engagement studies |
| Educator Analytics | Moderate | Moderate | Learning analytics research |

Real-World Results: How Leading Platforms Measure Up

The evidence base for AI in education is strengthening, but results vary sharply based on how deeply the technology is embedded into instruction. The following snapshots highlight measurable impact across segments:

- K-12: DreamBox Learning reports ~20% improvement in math proficiency and up to 1.6 years of academic growth in a single school year among regular users. This is supported by ESSA "Strong" evidence.

- K-12/Blended: Carnegie Learning (MATHia) has met the ESSA Tier 1 criteria. In the EMERALDS study, higher module completion rates correlated with better performance in Algebra I, particularly among low performers.

- Higher Education: Coursera (Coursera for Campus) reported a 300% license usage rate and a learner rating of 4.6 out of 5 when the program was integrated into courses offered by Prince Sultan University.

- Corporate Training: Kyron Learning showed a 16% improvement in comprehension within a 30-minute training session and a teacher recommendation rate of 93%. However, results were poor when the program was used only as an optional supplement.

What Do the OECD's 2026 Findings Tell Us?

According to the OECD Digital Education Outlook 2026, general-purpose AI tools improve performance in the short term but fail to create lasting learning gains. Students completed tasks 48% more successfully with AI, yet performance dropped 17% when AI access was removed, a phenomenon described as the "false mastery" effect.

In contrast, purpose-built educational AI systems, designed with pedagogy, scaffolding, and feedback loops, demonstrate more durable learning outcomes.

Ultimately, pedagogical intent matters more than raw model power. AI platforms that deliver sustained impact embed learning science, structured progression, retrieval practice, and metacognitive support directly into the product architecture.

How to Evaluate or Build an AI Education Platform

Whether to build custom or buy off-the-shelf depends on where your competitive advantage lies.

Build when your learning model or proprietary data is your USP. When differentiation comes from pedagogy, personalization logic, or unique datasets, owning the stack matters.

On the other hand, buy when speed-to-market is critical and AI is an enabler, not the core product. There is also a hybrid option, often the sweet spot: layer custom AI capabilities on top of an existing LMS to combine speed with differentiation.

Build vs. Buy Decision Matrix

| | Build custom (Proprietary AI platform) | Buy off-the-shelf (SaaS / vendor platform) |
|---|---|---|
| Strategy | | |
| Best when | Data is your USP; learning model is core IP | Platform isn't your differentiator; speed matters |
| Avoid when | No ML team; tight runway; unproven pedagogy | Strict data sovereignty or unique LMS workflows |
| Economics | | |
| Time-to-market | 12–24 months | 1–3 months |
| Upfront cost | High (eng. team) | Low–medium |
| Long-term cost | Lower (owned) | Ongoing licensing |
| Technical | | |
| Data control | Full ownership | Vendor-dependent |
| Customization | Unlimited | API / config only |
| Scalability | You manage infra | Vendor-managed |
| Compliance | | |
| FERPA / COPPA | Your responsibility to engineer | Vendor certifications; verify before signing |
| State privacy laws | Full control over data residency | Review DPA carefully |

Hybrid option: buy an LMS foundation and build a custom AI layer on top, which captures speed-to-market while preserving data ownership.

What to Look for in a Platform Partner

Evaluation must go beyond features to cover infrastructure, pedagogy, and compliance:

- Technical criteria: Robust data infrastructure, scalable ML pipelines, API extensibility, and seamless integration with existing systems

- Pedagogical criteria: AI grounded in learning science — look for evidence of scaffolding, feedback loops, and adaptive pathways (not just content generation)

- Compliance: Adherence to FERPA, COPPA, and relevant state or regional data privacy regulations

How to Measure ROI After Implementation

ROI in AI education is multi-dimensional, spanning engagement, retention, and business outcomes.

ROI metrics after implementation

| Dimension | Metrics | What It Signals |
|---|---|---|
| Engagement | Active learning time, interaction depth, assessment velocity | Are learners meaningfully engaging? |
| Retention | Completion rate, semester persistence, NPS | Are learners continuing and satisfied? |
| Learning Impact | Skill progression, assessment improvement | Is actual learning happening? |
| Business (EdTech) | User retention, time-to-value, LTV/CAC | Is the model sustainable and scalable? |
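
Two of these metrics are easy to compute once the underlying counts are available. The sketch below shows completion rate and LTV/CAC, using the common simplification LTV = average monthly revenue per user × gross margin ÷ monthly churn. The function names and all input figures are hypothetical.

```python
def completion_rate(enrolled, completed):
    """Share of enrolled learners who finished the course."""
    return completed / enrolled if enrolled else 0.0


def ltv_to_cac(avg_monthly_revenue, gross_margin, monthly_churn, cac):
    """LTV/CAC ratio, with LTV approximated as revenue x margin / churn."""
    ltv = avg_monthly_revenue * gross_margin / monthly_churn
    return ltv / cac


# Hypothetical inputs for illustration only.
rate = completion_rate(enrolled=500, completed=360)
ratio = ltv_to_cac(avg_monthly_revenue=30.0, gross_margin=0.8,
                   monthly_churn=0.05, cac=160.0)
print(f"Completion rate: {rate:.0%}")  # Completion rate: 72%
print(f"LTV/CAC: {ratio:.1f}")         # LTV/CAC: 3.0
```

Tracking both before and after rollout gives a like-for-like view of whether the platform is moving retention and unit economics in the right direction.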

The most effective AI education platforms are not defined by technology alone, but by how tightly that technology aligns with learning outcomes and business goals.

What Risks and Challenges Should You Prepare For?

With AI in education, the question isn't whether risks exist but whether a plan is in place before deployment. Without a clear plan, most implementations end up reacting after the damage is already done.

Risk register: key challenges and mitigations

| Challenge | Why it matters | Mitigation |
|---|---|---|
| Data privacy exposure | FERPA applies to federally funded institutions but has loopholes. SOPIPA restricts behavioral marketing to K-12 students, yet enforcement varies. Routing student data through AI vendors without a proper DPA creates immediate legal risk. | Sign compliant DPAs with every vendor before deployment. Conduct regular audits against FERPA and applicable state laws. Use on-premises or data-residency-restricted deployments for sensitive data. |
| Algorithmic bias | AI trained on narrow datasets can underserve students of color, English language learners, and those with IEPs. The risk is often subtle and cumulative, reinforcing inequities over time. | Require disaggregated performance data (by race, language, IEP status). Conduct equity audits after initial deployment. Maintain human oversight for high-stakes decisions. |
| Vendor concentration | Over-reliance on a small set of platforms creates systemic vulnerability. Pricing changes or vendor exits can disrupt entire systems. | Ensure interoperability (IMS Global, xAPI). Avoid single-vendor lock-in. Pilot solutions on short-term contracts before long-term commitment. |
| Low educator adoption | Many educators receive little to no AI-related guidance. Tools introduced without support often lead to resistance or misuse. | Provide clear AI-use policies before rollout. Invest in continuous training, not one-time sessions. Involve educators in tool selection. |
| Automation over-reliance | While AI can scale feedback and personalization, student outcomes still depend on human interaction. Over-automation risks disengagement. | Use AI to handle routine tasks and free up teacher time. Define minimum levels of human interaction. Track engagement beyond AI metrics (e.g., participation, attendance). |
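
The "disaggregated performance data" mitigation in the register can be illustrated with a small equity check: compute the completion rate per learner group and flag any group that trails the best-performing one by more than a chosen margin. The group labels, the synthetic records, and the 10-point gap threshold are assumptions for this sketch, not a recommended audit standard.

```python
from collections import defaultdict


def disaggregated_rates(records):
    """Return the completion rate per group from (group, completed) pairs."""
    totals = defaultdict(lambda: [0, 0])  # group -> [completed, enrolled]
    for group, completed in records:
        totals[group][1] += 1
        if completed:
            totals[group][0] += 1
    return {group: done / n for group, (done, n) in totals.items()}


def flag_gaps(rates, max_gap=0.10):
    """List groups whose rate trails the best group by more than max_gap."""
    best = max(rates.values())
    return sorted(group for group, rate in rates.items() if best - rate > max_gap)


# Synthetic records for illustration only.
records = [
    ("ELL", True), ("ELL", False), ("ELL", False), ("ELL", True),
    ("non-ELL", True), ("non-ELL", True), ("non-ELL", True), ("non-ELL", False),
]
rates = disaggregated_rates(records)
print(rates)             # {'ELL': 0.5, 'non-ELL': 0.75}
print(flag_gaps(rates))  # ['ELL']
```

A flagged gap is a prompt for human review of content, pacing, and support for that group, not an automatic verdict on the model.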

Build an AI-Driven Student Platform with Imaginovation

We build AI-powered education platforms that adapt to how people actually learn, using personalized learning paths, early risk detection, AI tutors, and real-time insights. Everything is designed around your learners, your data, and your goals.

Whether you're launching a new EdTech product or improving learning across an institution, we help you create platforms that drive real engagement, improve retention, and deliver measurable outcomes. Not just features.